Unless you’re a fan of dark or shady patterns, you probably struggle occasionally with integrity in your design practice: balancing stakeholder wishes against user needs, for example, or guiding users to hero paths while also granting them freedom to explore.

Recently, I worked on redesigning a mobile timebanking app, which helps neighbors share services and build supportive relationships. The public-good aspect of the project was appealing; the app’s values of community, trust, and support kept us focused on meeting users’ needs and being honest in our design. So of course we wanted to show users only information that was literally, concretely true. But what if a slight deviation from the truth would make users happier and more efficient in achieving their goals? What if fudging an interface helped reassure and speed the user along her way?

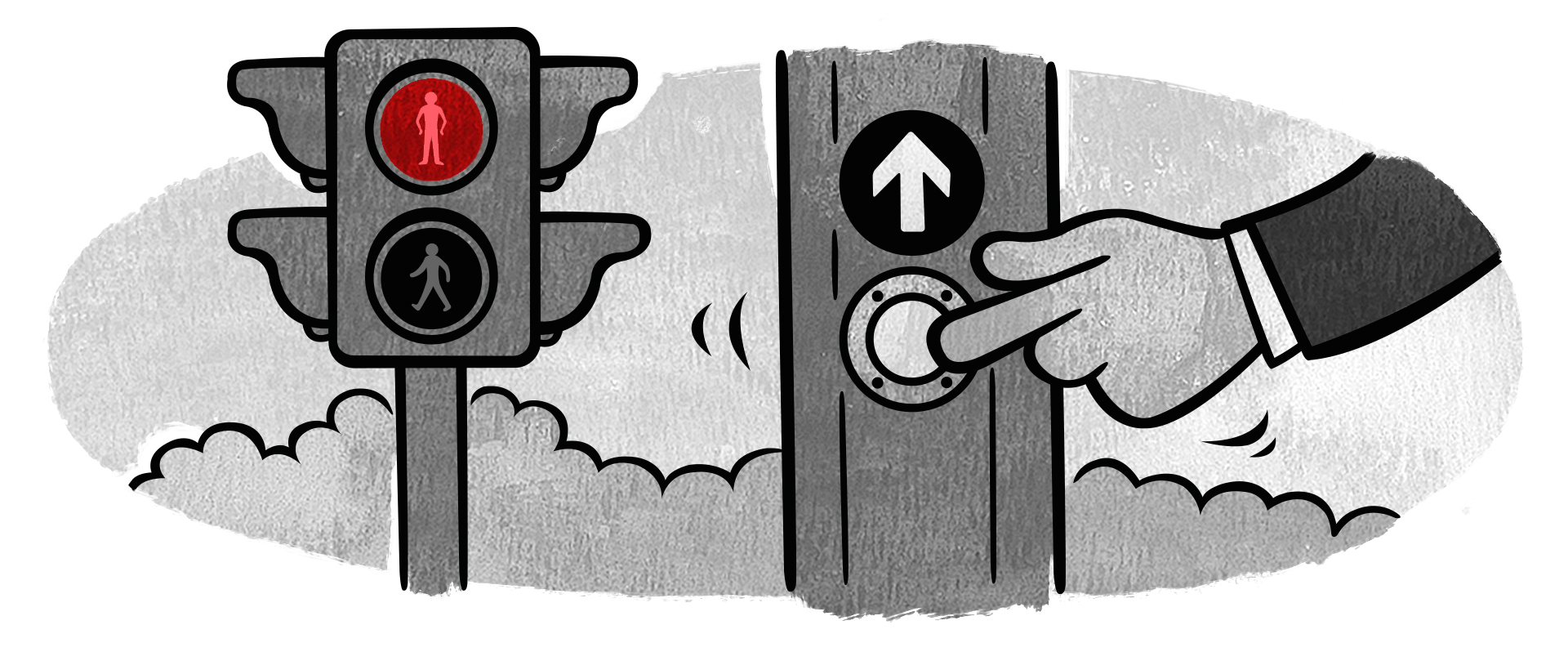

This isn’t an uncommon issue. You’ve probably run into “Close Door” buttons that don’t really close the elevator, or sneaky progress bars that fill at an arbitrary rate—these false affordances and placebo buttons are everywhere, and might make life seem a bit easier. But is this ethical design? And can we build a framework for working with false affordances and designing with integrity?

What’s the hubbub?

Timebanks are local, virtual exchanges in which neighbors can post requests for and offers of service in exchange for “timedollars,” a non-fungible alternative currency based on an hour of service. For example, if I do an hour of sewing for you, I can turn around and “spend” that one timedollar I earned on having someone mow my lawn for an hour. Granted, I can’t sew and don’t have a lawn, but you get the idea.

By enabling neighbors to connect and help each other, timebanks create community in what was just a location. Through mobile timebanking, people feel more connected with their neighbors and supported in their daily lives—in fact, that’s the value proposition that guided our project.

With teams recruited from PARC, Pennsylvania State University, and Carnegie Mellon University, our goal was to redesign the existing timebanking mobile app to be more usable and to connect users more easily through context-aware and matching technologies.

We started by evaluating issues with the existing app (mapping the IA, noting dead ends and redundancies, auditing the content) and analyzing the data on user actions that seemed most problematic. For instance, offers and requests for timebanking services were, in the existing app, placed in multilevel, hierarchical category lists—like Craigslist, but many layers deep.

Our analyses showed us two interesting things: first, that the service requested most often was a ride (such as to the doctor or for groceries; the user population was often older and less physically mobile); and second, that many postings by users to offer or request rides were abandoned at some point in the process.

We speculated that perhaps the timely nature of ride needs discouraged users from posting a request to a static list; perhaps drivers wouldn’t think of diving down into a static list to say, “Hey, I commute from near X to Y at about this time a few days a week—need a lift?” So we incorporated walk-throughs of the old app into semi-structured interviews we were already conducting in our research on peer-to-peer sharing motivations; participants’ actions and responses supported our speculations.

With that in mind, we proposed and started prototyping a new feature, TransportShare, at the top level of the app. We hoped that its presence on the app’s home screen would encourage use, and its similarity to popular ride-requesting apps would ease users into the experience.

We created a list of parameters the user would have to specify: starting/pickup point, ending/drop-off point, pickup time, “fudge factor” (how flexible this time is), one-way/roundtrip, and a text field for any special needs or considerations. Then we built prototypes, moving from sketches to low-fidelity mockups to higher-fidelity, interactive prototypes using Sketch and InVision.
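For readers who think in data structures, the parameter list above could be sketched roughly as follows. This is only an illustration: the class name, field names, and the idea that the fudge factor applies equally before and after the pickup time are all assumptions, not details of the actual app.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class RideRequest:
    """Hypothetical model of a ride request; every name here is illustrative."""

    pickup_point: str          # starting/pickup point
    dropoff_point: str         # ending/drop-off point
    pickup_time: datetime      # requested pickup time
    fudge_factor: timedelta    # how flexible the pickup time is
    round_trip: bool = False   # one-way by default
    special_needs: str = ""    # free-text notes or considerations

    def pickup_window(self):
        """Return (earliest, latest) pickup times.

        Assumes the fudge factor is symmetric around the requested time.
        """
        return (
            self.pickup_time - self.fudge_factor,
            self.pickup_time + self.fudge_factor,
        )
```

Note that nothing in this sketch stores a route: only the two endpoints are known when the request is made.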

The choices we make

Our ethical dilemma emerged as we designed the ride-request process. After the user specifies “Start” and “End” points for a ride, the app shows a summary screen with these points, the time of the ride, and a few options.

Since you’re all crackerjack designers, you’ve noticed that there is no line connecting the Start and End points, the way there is in other apps that plot a route, such as Google or Apple Maps. This was intentional. Our user research showed that people offering rides were often running other errands or might need to make detours. Since we could not anticipate these needs, we could not guarantee a specific route; showing a route would be, to some high degree of probability, wrong. And, particularly in an app that hinged on community values and honesty, that felt deceptive.

Being truthful to the nth degree is the ethical choice, right? We wouldn’t want to display anything that might not be 100 percent accurate and deceive the user, right? Doing so would be bad, right? Right?

Oh, I was wrong. So, so wrong.

And lo, came…a test

At this point, we had solid research questions we wouldn’t have had at an earlier stage and clean, clickable prototypes in InVision we could present to participants. With no budget for testing, I found prospective participants at a Meetup designed to let people share and test their projects and selected the ones who had used some form of peer-to-peer app. (We are planning subsequent usability tests with more senior participants, specifically for readability and accessibility.)

I conducted the tests with one user at a time, first briefing them on the nature of timebanks (you’ve lived through that already), and setting the scenario that they are in need of a neighbor to drive them to a nearby location. They were given latitude in choosing if this would be a ride for now or later, and one-way or roundtrip. Thinking out loud was encouraged, of course.

As they stepped through the task, I measured both the time spent per screen (or subtask) and the emotional response (gauged by facial expression and tone of responses).

| Subtask | Average | Outlier | Emotion |
|---|---|---|---|
| 1: Set request time | 11s | 12s | |
| 2: Set one-way/roundtrip | 10s | 17s | |
| 3: Pick start | 7.5s | 11s | |
| 4: Pick destination | 6s | 8s | |
| 5: Confirm request | 9.5s | 14s | |
| 6: Review request | 14.8s | 18s | |

The users tended to move easily through the entire task until they hit Subtask 6 (reviewing the request at the summary screen), and I saw there was a problem.

Participants visibly hesitated; they said things like “ummm…”; their fingers and eyes traced back and forth over the map, roughly between the tagged Start and End points. When I asked open-ended questions such as, “What are you seeing?” and “What did you expect to see?” the participants said they were expecting to see route lines between the Start and the End.

In debrief sessions, participants said they knew that any route they might have seen on the summary screen wouldn’t be the “real” route, that drivers may take different paths at their own discretion. But the lack of lines connecting the Start and End points disconcerted them and caused hesitation and unease.

Users were accustomed to seeing lines connecting the end points of their trip. I could see this in the time it took them to interact with that screen, in their faces, and in their think-alouds. Some thought they couldn’t complete the task or that the app wasn’t finished with its job; others reported a lower level of trust in the app. We’d made an assumption—grounded in honesty—about what users wanted to see, and discovered that it did not work for them.

The retesting

With these results in mind, I decided to run a smaller usability test with the same protocol and screens, with one change: a line connecting the Start and End points.

This time, the participants said they knew the drivers might not take that route, but it didn’t matter—route lines were what they expected to see. (Perhaps this is an indication they may not trust Google or Apple Maps pathfinding, but we’re not at that level of cynicism, not yet.)

| Subtask | Average | Outlier | Emotion |
|---|---|---|---|
| 1: Set request time | 7.8s | 13s | |
| 2: Set one-way/roundtrip | 9.8s | 11s | |
| 3: Pick start | 6.5s | 8s | |
| 4: Pick destination | 5.3s | 6s | |
| 5: Confirm request | 6.8s | 8s | |
| 6: Review request | 8s | 9s | |

I graphed out the average user time per screen and averaged emotional response (observed: positive, negative, meh). I think it’s not unreasonable to read low time per interaction plus positive emotion as a good sign, and high time per interaction plus negative emotion as a sign that I needed to go back and look at a problem.
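To make the comparison concrete, here is a small sketch that subtracts the second test’s average times from the first test’s, using the figures in the two tables above. The variable and function names are my own, and because the tables do not state sample sizes, this is descriptive arithmetic only, not a significance test.

```python
# Average seconds per subtask, copied from the two tables above.
first_test = {
    "1: Set request time": 11.0,
    "2: Set one-way/roundtrip": 10.0,
    "3: Pick start": 7.5,
    "4: Pick destination": 6.0,
    "5: Confirm request": 9.5,
    "6: Review request": 14.8,
}
second_test = {
    "1: Set request time": 7.8,
    "2: Set one-way/roundtrip": 9.8,
    "3: Pick start": 6.5,
    "4: Pick destination": 5.3,
    "5: Confirm request": 6.8,
    "6: Review request": 8.0,
}


def time_saved(before, after):
    """Seconds saved per subtask (positive means the second test was faster)."""
    return {task: round(before[task] - after[task], 1) for task in before}


saved = time_saved(first_test, second_test)

# The subtask whose average time dropped the most between the two tests.
largest = max(saved, key=saved.get)

for task, seconds in saved.items():
    print(f"{task}: {seconds:+.1f}s")
print("Largest drop:", largest)
```

The largest drop is at the review step, where the average fell from 14.8s to 8s. That is the one screen that changed between tests.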

The difference is just a line—a line participants are either explicitly or implicitly aware isn’t a true representation of reality. But the participants found the line comforting, to the point where, without it, usability took a serious hit.

Toward a heuristic

So when you get in an elevator, do you press the “Close Door” button? If so, what prompted you? Did you press it once and wait, or keep pressing until the door actually closed? How did that make you feel, the pressing, or even just the existence, of said button?

Of course, we now know that in many elevators the “Close Door” button does exactly nothing. It’s a false affordance, or placebo button.

Which brings us back to the ethical question. Should I design in elements that do not technically mislead, but reassure and increase comfort and effectiveness of the product for the user? Does intention prevent this from being a dark pattern?

Remember, dark patterns are dark not because they aren’t effective—they’re very effective—but because they put the user in positions of acting against their own interests. Kim Goodwin speaks of well-designed services and products acting as considerate humans should act; perhaps sometimes a considerate human tells white lies (I’m not judging your relationships, by the way). But then again, sometimes white lies are a seed that turns into an intractable problem or a bad habit. Is there a way to build a framework so that we can judge when such a design decision is ethical, instead of just effective?

I originally wrote about this only to ask a question and open a conversation. As a result, the heuristics below are in the early stages. I welcome feedback and discussion.

- Match between affordance and real world. The affordance (false or placebo) should relate directly to the situation the user is in, rather than lead the user away to a new context.

- Provide positive emotional value. The designed affordance should reassure and increase comfort, not create anxiety. Lulling into a stupor is going too far, though.

- Provide relief from anxiety or tension. If the product cannot provide positive emotional value, the affordance should serve to reduce or resolve any additional anxiety.

- Increase the user’s effectiveness. The affordance should help improve efficiency by reducing steps, distractions, and confusion.

- Provide actionable intelligence. The affordance should help the user know what is going on and where to go next.

- Add context. The affordance should offer more signal, not introduce noise.

- Move the user toward their desired outcome. The affordance should help the user make an informed decision, process data, or otherwise proceed towards their goal. Note that business goals are not the same as user goals.

- Resolve potential conflict. An affordance should help the user decide between choices that might otherwise be confusing or misleading.

These are not mutually exclusive, nor are they a checklist. Checking off the entire list won’t guarantee that all of your ethical obligations are met, but I hope that meeting most or all of them will weed out design that lacks integrity.

One unresolved question I’d like to raise for debate is the representation of the false affordance or placebo. The “Close Door” button wouldn’t work if labeled “This Doesn’t Close the Door but Press it Anyway,” but in my case we do want to make the user aware that the route line is not the route the ride will necessarily take. How to balance this? When does it become deception?

In addition, I can recommend Dan Lockton’s Design with Intent toolkit, licensed under the Creative Commons 3.0 standard. And Cennydd Bowles has written and spoken on the larger issues of ethical design; if this is a topic of interest (and indeed, you’ve read many words on the topic to get to this point), it’s worth diving into his site.

I hope to keep thinking about and refining these heuristics with additional feedback from designers like you. Together, let’s build tools to help us design with integrity.

Fantastic article. With the user research figures, the average times look very quanty. I’ve got a feeling it was qual research though. How many users did you interview? And if you used think-aloud, didn’t the user’s choice to verbalise/not verbalise have a big effect on their time-taken?

This is an interesting article. I think it is ok to use the “route line” as long as it is communicated to the parties involved that routes may vary. Have you continued working on this project? I would like to learn more about follow-up tests and outcomes. Maybe interviewing groups or people that do rideshares could be of interest. With a taxi or Uber, since it is a paid service the time and expectation of getting to a place are pretty fixed. For a free service, there could be a lot of variables.

First thought was to simply dash the line, indicating that the route is tentative.

Second thought was that if the user was expecting to see a line connecting the start and endpoints, why not draw a line and arrowhead from the start point directly to the endpoint?

Thanks for reading! And the comments.

@Harry: I take your last sentence to mean that if the user weren’t thinking out loud, that might reduce the time value? That’s a good point, and if we were to go into more “how do you justify this” detail, something we probably should quantify better, but participants roughly said about the same amount of stuff; the delta was observed confusion. Perhaps I could have made that more clear in the article, so thanks for the feedback.

@Will: Also thanks. Yes, we’re still working on it, though the nature of the teams and that it’s a part-time project for us all means things like academic calendars and CHI paper submissions can put this on delay at times. And good point: we are planning on putting a small “actual route may vary” on the screen (as opposed to, you know, me saying it). Since this is volunteer (actual sharing), not a service, the people offering rides probably have their own needs in terms of routing.

That reminded me of something my waves professor told us in the Faculty of Engineering, Cairo University:

When he helped invent microchip antennas, there was no longer any need for that long piece of plastic/antenna to be part of any mobile phone. But the marketing team claimed that users would blame the phone for a weak or missing signal in some places, when it’s a network coverage problem, not a phone problem!

So, they decided to sell two groups of models at the same time:

1. A group with no long antennas at all.

2. And a group with fake antennas.

The ones with no antennas struggled a lot in the market for some time as people were more comfortable with the long antennas (they didn’t know they were fake antennas) thinking they’ll get better network signal.

I think it’s all related to the common conceptual model at that time. So, timing of the design is a very important factor in deciding to be honest or having to fake something to make the users more comfortable reaching their goals… without deceiving them and making them seek other goals they wouldn’t have wanted if they had understood everything well enough.

Is it ethical for doctors to use the placebo effect?

http://www.webmd.com/pain-management/what-is-the-placebo-effect?page=2

They use it to help some cases (like depression) which have no real cure by giving them blank pills and telling them that these pills are the most advanced and have 100% healing rates… And it usually works as their brains and bodies behave differently and kind of heal themselves!

I believe the designer’s “intention” is the primary factor in determining whether the design is ethical or not.

Muhammad, it’s unethical for a doctor to give a placebo when a more effective treatment is available (as is the case with depression – antidepressants aren’t all that effective on average, but they still beat placebo by enough that it would be unethical to substitute placebo for them without telling the patient). It would also be unethical to claim a 100% cure rate, since that would damage the trust of the much more than 0% of patients who would not recover. Having good intentions while doing harm does not make an action ethical.

It seems to me that either of Michael’s suggestions would be as clear/honest as an “actual route may vary” note, and also more noticeable to the user.

It will be interesting to know if the users still feel the same way about the line once they get a ride and go on a different route than shown on the map. Knowing something is after all not the same as experiencing it.

And what happens if you take the map out of the equation? No map, no line, no being sneaky, but another way of representing that same data.

Guys, you find me somewhat puzzled.

On the one hand this is a great article and I’m still baffled by all the research behind the scenes. On the other hand, to me the “dark pattern” theme is really not about integrity at all, but more about “legible intent”.

Let me clarify. So-called “dark patterns” are not dark per se. It’s the designer’s intent that’s bent on doing either good or evil.

Architects and designers who create product or urban furniture are confronted with the harshness of reality on a daily basis – kids choke on pen caps, skaters fall from handrails and break their necks.

What is called “dark pattern” here, can also be called “foolproofness” in the brick and mortar world. And yes, foolproofness can also be used to sell you stuff you don’t want – candies at child-height right next to where you queue up at the checkout desk, anyone?

IMHO beyond all the tools and techniques, ergonomics and cognitive psychology, what is expected from a designer is their capacity to declare their intent and inform it into their production.

Which eventually loops back neatly to what I love about this article: the adamantly clear 8 heuristics Dan describes in the end are the foundational groundwork through which you can make your intent crystal clear to the end-user.

Thanks for more comments!

@Fanny: Yes, it will be interesting, though given the nature of this production, it’ll still be a while before we’ll have the app used in the wild. It’s an interesting idea, using no map, but that does seem to be how many people plan out their errands/transit needs (we’re offering the ability either to enter an address w/predictive text or use a map to specify start/end points… and I know, this is bog-standard).

@Lalao: Thanks for those insights! So you’re saying it’s about transparency of the design intent — I can sure see that few dark patterns would work as well if the product explicitly said “muah hah ha, you’ll end up in a perpetual purchase contract but PLEASE PROCEED”. But when does disclosure become a burden to the user? We all, in real life, have to delegate a lot of this process of trust and confidence decision-making (“I’m sure NPR won’t screw me over, so I’ll sign up”). Thanks for the kind words; I’m glad the heuristics make sense outside of my own head!

Because you seem to be quite knowledgeable and effective with product research, I have an odd question pertaining to pre-product-launch-research (yes I made that up), specifically about the copy or wording used in the product.

For example, a landing page solely created to convert users into signing up for a service (as it should):

Assuming the product is ‘foolproof’ itself, do you Dan (or anyone else reading this), find it more effective to launch a product with little to no research into what exact copy should be written to ‘sell’ the product, and then spend the next few weeks using that live product to research user experience, utilize web analytics and pivot accordingly?

OR do you suggest delaying launch to research the perfect copy and launch a few weeks later? Launch then pivot or perfect then launch? That is the question.

There is an obvious answer to this when it comes to the interface and design. But when copy can be adjusted quite effortlessly even late in the game, it makes me wonder…

Any thoughts on this dumb and quite useless question?

Dan,

I’ve studied the matter of literal accuracy myself in the past, so the question resonated with me. I’ve determined that it’s often the case that a better service is provided to the user by being “familiar” than any pretense at being “perfectly accurate.” In my own work, I notice the phenomenon most often when wording explanations. The accurate explanation would typically read like legalese, verbal spaghetti that does more harm than good. You are trying to design a user interface, not write a contract for momentous obligations. By employing “the familiar” the user can “go directly and quickly to a place in their mind” and then perhaps meander around until they arrive at the exact mentality of your interface.

It’s a fascinating, but somewhat off-topic question why the thin blue line is such a crutch… beyond its habitual appearance. I can only come up with its providing of “helpful redundancy.” By seeing a line, for instance with 3 or 4 turns, we have reinforcement—in 0.25 seconds—that we haven’t errantly asked to travel between cities.

But more importantly I’d like to suggest that your ascribing of being ‘100 percent accurate’ by adding the blue line is highly subjective. To use argument by hyperbole, I could tell you that nothing (!) in the interface is accurate. The driver won’t make perfect 90-degree turns; the start time won’t be precisely 2:00 AM; the trip will not be the 2-inch duration shown on a phone; and the car certainly won’t see a blue stripe guiding the way. It is all abstraction. But fortunately humans are pretty good at un-abstracting. In other words, I don’t think there’s the slightest deception in the line, let alone poor ethics (which would require intent added on).

-Jack

So glad to see these great questions and thoughts coming in. Thanks!

@Skylar, that sounds like more of a business process question? I’m not sure I quite understand, and I’m not a business person, so add a lot of salt to anything I say on this. But I will add that it matters whether you’re rewriting copy for a small section of a web page or for an onboarding screen for an app (this last is indeed non-trivial, as http://www.useronboard.com and my own experience attest). If it’s a matter of copy, try getting a cross-team group together in a room for an afternoon to brainstorm around it; keep in mind who the users are and what they’ll want to do. That part should not take weeks.

@Jack: Ha, thanks for the reassurance! And indeed, familiarity is a design element, and argument by hyperbole can be useful. I do hope these heuristics, as they evolve and are refined, can also be of use in checking design decisions that might potentially be… less savory.

Great article and an interesting case.

To the point of the article, truthfulness is a key to customer service. This is a service app. Being untruthful about the route could cause huge headaches for the company (on the backend of the ride). To be a leader in a category is to change minds. The expectations of the users have to be changed. That’s not something done easily and it won’t help in the long run to tell little white lies to customers.

I have the same questions as Fanny. Is the momentary good feeling at the “reservation” confirmation worth the long-term disappointment of the ride not going as expected? With the quality of free and not for profit services in America, can we expect that people will be cool when it doesn’t act like what they know–or have heard about–already (e.g., Uber, Lyft, etc.)? Knowing the expectations for the service overall is good information to gather, if it hasn’t been already.

My thought is that an ambiguous service should show an ambiguous route while still being truthful. Let the user decide if it’s a service he wants. Users should have enough information to make an honest choice for or against the service. The map line and disclaimer technically do that, but is that the most honest and forthcoming way to present it?

As an example, people are content when airlines show only the names of two destinations with a single line and arrow drawn between them. We all know that not every flight will go as planned, but we are content when the start and end points meet our expectations. Can that sort of non map-based, generic graphic be presented?

Thanks again for the thoughtful article.

Out of curiosity, have you considered a single “as the crow flies” line from point A to B rather than calculating and displaying a route?

Prima facie this satisfies the emotional need for a blue line connecting two points, and also satisfies the transparency issue of being upfront about the ambiguity of the actual route to be followed.

Addendum:

Later development could introduce being able to define additional points in the route to provide more granular detail of the route. In this case, if there’s a high level of precision it is because it has been provided by the user as opposed to being second-guessed by a computer.

Conceptually this then becomes more about defining flexible goals (going from a to b, possibly/preferably via c) that other people can assist with, rather than defining the means (travel along road x, turn at corner y, etc until you reach destination z).

Ok, so I really enjoyed this article. But I find something troubling. The concept of affordance seems to be built on addressing an emotional need with a pattern that’s not necessarily suited but familiar. (expectation: elevator door closing, need: control over the closing time, pattern: buttons control elevators, solution: button that closes elevator door) But would it be better to radically introduce a different pattern instead of conforming to and reinforcing an ill-suited one? (instead of a button adding a door closing timer; instead of a map at the confirmation step providing only start and end address). Are the white lies necessary only because they use a wrong pattern to solve the problem? Maybe they are needed to compensate for a mismatch between the problem and a familiar but inefficient solution.

Design is the best part of a website to present to any client. You should design properly with white lies. Thank you.

It’s this level of consideration that I admire from UX designers.

Although I don’t personally see this as an ‘ethical dilemma’ I do think I may perceive the app as slightly less trustworthy through a lack of consideration if I saw an incorrect route.

A combination of the suggestions from the guys in the comments above would completely reassure me. They are – Dashed line with a note to say *route may vary.

Great work, it must have been a fun project.

Jack

UI Designer @ Blockety HTML Templates

Excellent questions and thinking. IMHO, even well-intentioned design that doesn’t reflect the truth can backfire. You never know when someone really needs to close the doors on an elevator and will rely on the design working as advertised.