As web professionals, we’re accustomed to putting out fine websites based on best practices, analytics data, competitor review, secondary research, and our own expertise. Talented as we may be, though, it can be hard to stay aggressively committed to optimizing the user experience when more powerful and immediate success indicators like client satisfaction, aesthetic appeal, and ease of development and maintenance all compete for our attention. To overcome these boundaries on the empathy that we can extend to our sites’ imagined visitors, there’s no substitute for research conducted with actual users.

Dana Chisnell rightly noted in 2009 that usability testing allows us to “make good, solid, confident decisions about design.” By putting our best guesses about what works in front of our target audience, we get to see where we were right and where we were wrong. I don’t know anybody who’s seen their work tested without being surprised about how wrong some of their best guesses were.

Like any research method, though, usability testing has its drawbacks. Most importantly, it isn’t cheap. In a classic study, you’ve got the expenses of developing the testing plan, recruiting and compensating the participants, sometimes even travel. Even remote testing, which has its limitations, can be expensive, because analysis of the testing data is still no easy feat. In fact, analysis is often the biggest component of the project. Going through all those testing videos and cataloging the qualitative data just can’t be sped up without undermining the integrity of the study.

Moreover, the effectiveness of those typically costly methods depends on an organization putting its best foot forward. Conducting usability testing on a website with “low-hanging fruit” issues, those that could have been identified some cheaper way, means wasting the extra dollars on a project that, all else being equal, won’t yield the best possible website.

Fortunately, we have other usability research methods at our disposal. The standouts, expert review and heuristic evaluation, are both easy to add to a design and development process almost regardless of budget or resource concerns. Both involve minimal costs, as compared to full-scale usability testing, with expert review in particular being scalable to almost any project. For projects with fuller budgets, both techniques can maximize the cost-benefit ratios of subsequent usability testing. In the rest of this article, I’ll take a close look at each of these two techniques, their advantages and disadvantages, and the details of how to include them in your projects.

Expert review: The ultimate in discount usability engineering

In the world of usability research, expert review is known as a “discount usability engineering” method [1] because of its sometimes dramatic cost-benefit ratios. Think about it this way: On a website with enough traffic, even a 1 percent increase in conversion rate is bound to outweigh the cost of one expert spending a week or two on a review. That’s not to speak of the decreased user frustration and increased satisfaction and loyalty that also tend to accompany well-implemented findings from all kinds of usability studies.
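To make that comparison concrete, here is a rough back-of-the-envelope sketch. Every figure in it (traffic, conversion rate, order value, review hours, hourly rate) is a made-up assumption, and the function names are hypothetical. It also reads "a 1 percent increase" as a relative lift, which is only one way to take the phrase.

```python
def extra_annual_revenue(visits_per_year, conversion_rate, order_value, relative_lift):
    """Revenue gained per year if the conversion rate rises by relative_lift (0.01 = 1 percent)."""
    baseline_revenue = visits_per_year * conversion_rate * order_value
    return baseline_revenue * relative_lift


def review_cost(hours, hourly_rate):
    """Cost of one expert's time on the review."""
    return hours * hourly_rate


# All numbers below are hypothetical and only illustrate the arithmetic.
gain = extra_annual_revenue(
    visits_per_year=2_000_000,
    conversion_rate=0.02,
    order_value=50.0,
    relative_lift=0.01,
)
cost = review_cost(hours=60, hourly_rate=150.0)

print(f"Extra revenue per year: {gain:,.0f}")
print(f"Cost of the review:     {cost:,.0f}")
print(f"Review pays for itself: {gain > cost}")
```

Plugging in your own analytics figures shows quickly whether a review of a given length is likely to pay for itself on your site.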

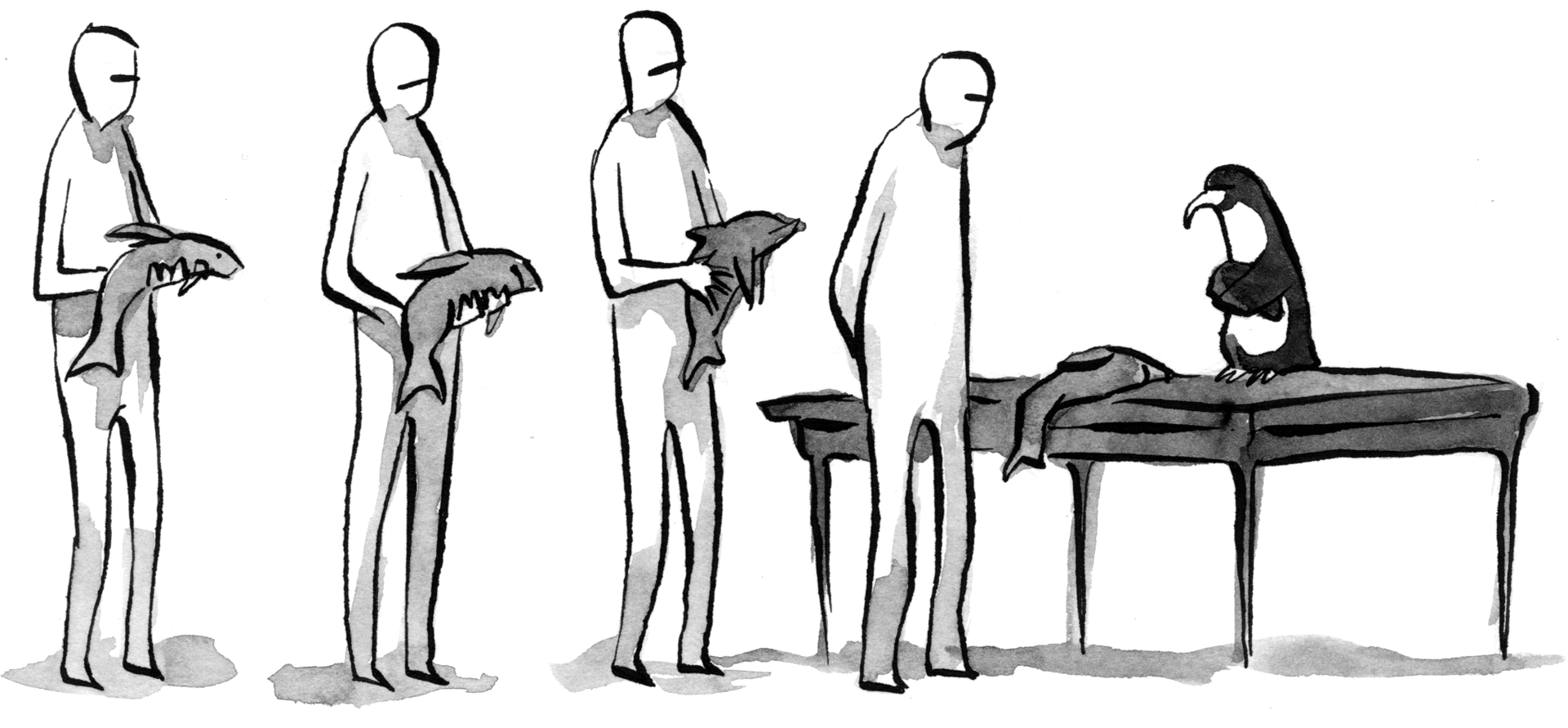

Expert review relies on the expertise and judgment of the evaluator rather than the feedback of an army of usability testing participants, as mediated, in larger firms anyway, by a brigade of analysts and account executives. Since different evaluators bring different backgrounds and subjectivities to the table, this literally single-minded approach limits the value of expert review to some extent. For this reason, projects with medium or large budgets sometimes include multiple reviewers.

Even with a single expert, though, this method’s advantages make it worthwhile on nearly any project. It’s blazing fast and perhaps the most cost-effective piece of usability engineering out there. It allows you to catch low-hanging fruit in preparation for a larger study (like usability testing) or to go after major issues on a site, whether it’s in the design stage or has been live for years. It works on projects of any size, since even a few hours of an efficient expert’s time will likely buy you substantial improvements in the areas most often affected by usability issues: conversion, satisfaction, and loyalty.

Heuristic evaluation: Where rigor meets ROI

In heuristic evaluation, an evaluator assesses the conformance of a website to a predefined set of usability heuristics, or guidelines for ensuring optimal usability. Like expert review, heuristic evaluation is considered a discount usability engineering method because of its typically favorable cost-benefit ratios [2].

Also like expert review, a heuristic evaluation can be undertaken with just a single evaluator, with all the advantages and risks discussed above. The difference is that the structure of a set of heuristics allows you to bring in multiple evaluators without dramatic cost increases. While it’s good to have at least one usability expert to coordinate the study, you needn’t pay for the expertise of a team, since it’s typically baked into the heuristics themselves. Luckily, the good people at Userfocus have put together a list of 247 novice-friendly guidelines, and have even put them into a nifty spreadsheet with spider charts and easy-to-follow instructions.

You’ll note that some of the heuristics in the Userfocus example cover topics like the overall appeal of the design and quality of the content. It’s worth noting that these areas fall outside the traditional purview of usability methods. Heuristic evaluation’s historical association with the goal of improving interface usability may have arisen because of the youth of web copywriting, for example, as compared to interface design. After all, research on software interface usability predates the advent of the web, let alone the study of it, by decades. It’s also a more direct precursor to the field of web usability than print design and copywriting are to their, um, digital analogs. So it makes sense that, in the early history of the web, methods like heuristic evaluation would be applied only within the usability field.

However, no principle demands such an exclusive relationship between heuristic evaluation and interface usability. In fact, heuristic evaluations that also cover things like design and content can benefit your work in those areas tremendously. Not surprisingly, many have recently attempted to generate useful heuristics for other domains (again, like copywriting). My own set of heuristics covers the following areas:

- Appearance: The appeal and effectiveness of the site’s look and feel, from major layout features to small typographical details.

- Content: The quality and strategic significance of the site’s content, including not only page copy but page metadata, PDFs and other files, and element-specific content (e.g., image descriptions).

- Interface Usability: Ease of use of the site’s interactive components, from the simplest navigation structures to the most complex forms.

- Accessibility and Technology: On a basic level, the site’s ability to adapt to diverse user needs, encompassing not only accessibility guidelines compliance but also browser compatibility and mobile- and tablet-friendliness.

Naturally, the heuristics you use should reflect your situation. If nobody in your study knows how to write, you may shy away from assessing content quality. If you haven’t got access to a tablet, you shouldn’t be considering tablet-friendliness.

It’s not enough to have a set of heuristics and a group of evaluators: The evaluators need to understand what to do with the heuristics. For that, you need some kind of rubric. Studies involving novice evaluators are best kept simple. The Userfocus format, for example, includes just a three-point rating system for severity, along with room for some notes.

Having experts as your evaluators allows you to include elements in your rubric besides severity rating and notes: the importance of each issue as perceived by the evaluator, for example, and a list of audiences affected by each one. However, there may be no reason to overcomplicate an admirably lean method. If needed, you can make sure the evaluation report covers these more nuanced topics.

Regardless of who’s working on the project, your severity rating system, which tells you something like, “How bad is it, Doc?” should never have more than three or four points. Heuristic evaluation helps you distill a rich and thorough data set into an accurate high-level view of a site’s strengths and weaknesses. The not-quite-black-and-white scoring is integral to that process. A seven-point scale, let’s say, would make it much harder to distinguish between severe, moderate, and minimal issues. (Imagine your doctor saying, “It’s not a very serious problem, but it’s also not totally fine. I’d call it Moderately Serious Plus.”)
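As a rough illustration of how a lean rubric turns many individual ratings into a high-level view, here is a minimal sketch. The three-point scale, the field names, the sample heuristics, and the averaging are all assumptions made for the example. They are not the Userfocus format or any standard scoring scheme.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical three-point severity scale; the labels are illustrative.
SEVERITY = {0: "minimal", 1: "moderate", 2: "severe"}


@dataclass
class Rating:
    evaluator: str   # who scored the heuristic
    area: str        # e.g. "Content" or "Interface Usability"
    heuristic: str   # the guideline being checked
    severity: int    # key into SEVERITY
    notes: str = ""  # free-form observations


def mean_severity_by_area(ratings):
    """Average the severity scores within each area, across evaluators."""
    scores = defaultdict(list)
    for rating in ratings:
        if rating.severity not in SEVERITY:
            raise ValueError(f"severity must be one of {sorted(SEVERITY)}")
        scores[rating.area].append(rating.severity)
    return {area: sum(vals) / len(vals) for area, vals in scores.items()}


# Made-up ratings from two evaluators.
ratings = [
    Rating("evaluator_a", "Content", "Page titles describe the page", 2,
           "Titles repeat the site name only"),
    Rating("evaluator_b", "Content", "Page titles describe the page", 1),
    Rating("evaluator_a", "Interface Usability", "Form fields have visible labels", 0),
]

summary = mean_severity_by_area(ratings)
# List the weakest areas first.
for area, score in sorted(summary.items(), key=lambda item: item[1], reverse=True):
    print(f"{area}: mean severity {score:.1f}")
```

Keeping the scale to three points means each area's average still reads as roughly minimal, moderate, or severe, which is the kind of summary the method is meant to produce.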

Finding help

Once you have a sense of the kind of work your project needs, it’s time to look for help. You can start within your own organization, but internal reviewers can be burdened by internal politics and too familiar not only with the design up for review but even with your house style and design patterns. Still, usability professionals within your company who aren’t assigned to the project can sometimes provide productive expert reviews.

If you choose to look externally, just ask around. Your professional and social networks are likely to come through for you. Usability professionals don’t often get the kind of reputations hotshot designers do, but if you work in any corner of this business, chances are you know somebody who knows one of us. Failing that, the surprisingly helpful usability.gov lists a few directories you can use to find services like these.

No matter where you find your consultant, you might consider these few questions:

- How much experience does the consultant have doing usability reviews? Some usability consultants focus more on the planning stage, delivering things like wireframes, personas, and information architectures. They may be rusty when it comes to reviewing existing work.

- On the other hand, does the consultant do anything other than reviews? I’ve found that my reviewing skills are kept sharp by my other tasks, which give me different perspectives on what works and what doesn’t. You may also have other needs (like content strategy or technical writing) that some consultants would be able to fill; many of us wear a few different hats.

- Do the consultant’s particular strengths appeal to you? For example, some consultants have brilliant minds for usability but write sloppy reports. If you’re going to have to use the report to get buy-in for a new design project, that might not work for you. If the report is for your eyes only, you might be able to make do.

- How much experience does the expert have in the industry or sector relevant to your project? It’s far from critical, but for very complex interfaces, a little prior knowledge of your users and their basic needs and dispositions can go a long way.

Baby steps toward a perfect world

In a perfect world, our understanding of our users and how they work with our websites would mean conducting not only a lot more usability testing, but also more interviews, more ethnographic observation, more… everything. In our actual world, though, tight budgets and schedules still too often mean cutting users out of the process, abandoning our empathy for them, and relying on “best-guess design.”

But it doesn’t have to be that way. The typical ROI for discount usability engineering methods is stratospheric [3]. Again, without talking to users directly, we can only come so close to perfect. But if we can’t afford usability testing, let’s at least set aside a small percentage of our budgets for methods like expert review and heuristic evaluation, fast and effective processes designed to make our best guesses that much better.

I like your article Devan and I’m pleased you’ve pointed people to the list of guidelines we use for expert reviews at Userfocus — but I don’t understand why you seem to couch this as an either-or discussion. Any sensible project manager will include time for both usability testing (the gold standard) and for expert reviews. I’m sure it’s not your intention, but people may read your article and think that expert reviews are a cheap alternative to user testing. Expert reviews are best used to achieve an extra iteration through the design loop, not as a replacement for user testing.

Thanks for the comment, David (and for the excellent set of guidelines). It’s good to know that the article seemed to you to be an either-or, because that certainly wasn’t my intention, and I’m glad to have the chance to discuss the question further.

So, let me say: You and I agree, I think, that there’s no substitute for testing with actual or potential users, that every effort should be made to involve real users in every project, and that expert review and heuristic evaluation work best when they multiply the value of a usability study rather than supplanting the study itself. I may not have been emphatic enough on these points, largely moving on from them after my first two paragraphs.

At the same time, I do see certain circumstances where involving real users just won’t work as well as one of these other measures. Perhaps a project has run out of money or is running behind against a hard launch deadline. Ten hours of an expert’s time will be cheaper and can be put into action much more quickly and effectively than even a guerilla usability study, in my experience. (Some projects also have no budget at all, as with some non-profit clients I’ve had. Personally, I’m more willing to do expert reviews _pro bono_ than I am usability testing.)

I’d also say that it’s common for usability to be a last priority. If my clients or their bosses are the wrong combination of unwilling to pay for a full-scale usability study and skeptical that guerilla studies have any value (both positions I find misguided and try to help work against), they are often nonetheless willing to enlist an expert to spend a week reviewing a site. Such a review not only improves a site or design, but in some cases helps make the case for later usability testing, since many reports, if they’re honest, will have to identify some points on which there will be, again, no substitute for the input of real users.

None of these situations is ideal, of course. I wish every project brought real users into the process. In my view, though, expert reviews and heuristic analyses can be stop-gap measures in a world where usability testing just isn’t conducted as much as we’d like. While we’re fighting the fight for more of it, I think we might as well also help users with the less direct methods on the table here.